Introduction

In modern web applications and distributed systems, APIs are constantly exposed to a large number of requests from users, services, and sometimes malicious actors. Without proper control, this traffic can overwhelm servers, degrade performance, and even lead to system outages.

To prevent such scenarios, systems implement rate limiting, a technique used to control the number of requests a client can make within a specific time frame. It acts as a protective layer that ensures fair usage, system stability, and security.

For example, consider a public API that allows users to fetch data. Without rate limiting, a single client could send thousands of requests per second:

```javascript
for (let i = 0; i < 10000; i++) {
  fetch("/api/data");
}
```

This can overload the server and affect other users. Rate limiting prevents this by restricting how many requests are allowed within a given time window.

What is Rate Limiting?

Rate limiting is a mechanism used to restrict the number of requests a client can make to a server within a defined time period.

For instance, an API might allow:

- 100 requests per minute per user

- 1000 requests per hour per IP

If a client exceeds this limit, the server responds with an error, typically:

```http
HTTP/1.1 429 Too Many Requests
```

In a Node.js application built with Express, rate limiting can be added as middleware, for example with the express-rate-limit package:

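```javascript
const express = require("express");
const rateLimit = require("express-rate-limit");

const app = express();

const limiter = rateLimit({
  windowMs: 60 * 1000, // 1 minute
  max: 100, // maximum requests per client within each window
});

app.use("/api", limiter);
```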

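This rejects requests from any client that makes more than 100 requests to /api within a one-minute window. By default, express-rate-limit identifies clients by IP address and answers excess requests with 429 Too Many Requests.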

How Rate Limiting Works

Rate limiting works by tracking the number of requests made by a client and enforcing limits based on predefined rules.

Step 1: Identify the Client

Clients are identified using IP addresses, API keys, or user tokens:

```javascript
const clientId = req.ip;
```
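Relying on req.ip alone can lump together many users who share one address. A minimal sketch of choosing a more specific identifier, assuming the API key arrives in a hypothetical x-api-key header and that an authentication middleware has already set req.user (both are assumptions, not part of the original example):

```javascript
// Pick the most specific identifier available for rate limiting.
// Assumptions: "x-api-key" is a hypothetical header name, and req.user
// is populated by an earlier authentication middleware.
function getClientId(req) {
  const apiKey = req.headers["x-api-key"];
  if (apiKey) return `key:${apiKey}`;               // per API key
  if (req.user && req.user.id) return `user:${req.user.id}`; // per logged-in user
  return `ip:${req.ip}`;                            // fall back to IP address
}

// Usage with plain objects shaped like Express requests
console.log(getClientId({ headers: { "x-api-key": "abc123" }, ip: "203.0.113.7" })); // key:abc123
console.log(getClientId({ headers: {}, user: { id: 42 }, ip: "203.0.113.7" }));      // user:42
console.log(getClientId({ headers: {}, ip: "203.0.113.7" }));                        // ip:203.0.113.7
```

The prefixes keep the three kinds of identifier in separate namespaces, so an API key can never collide with an IP address.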

Step 2: Track Requests

The system keeps track of request counts:

```javascript
requestCounts[clientId] = (requestCounts[clientId] || 0) + 1;
```

Step 3: Enforce Limits

If the request count exceeds the threshold:

```javascript
if (requestCounts[clientId] > 100) {
  return res.status(429).send("Too Many Requests");
}
```

Step 4: Reset Counters

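Counters are reset at the end of each time window, for example with a single timer that clears every client's count once per minute:

```javascript
// Clear all counts every 60 seconds (a simple fixed window).
// One shared timer avoids scheduling a new timeout on every request.
setInterval(() => {
  for (const id of Object.keys(requestCounts)) delete requestCounts[id];
}, 60000);
```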

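In production systems, counts are usually kept in a shared in-memory store such as Redis rather than in a local object, so that they are shared across server instances and survive process restarts.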
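The four steps above can be combined into a single Express-style middleware. This is a minimal sketch, assuming one server process and an in-memory Map; the createRateLimiter name and the injectable now clock (used only to make testing easy) are illustrative, not part of any library. Instead of timers, it resets each client's counter lazily once its window has expired, and it adds a Retry-After header, which HTTP permits on 429 responses.

```javascript
// Minimal in-memory fixed-window limiter combining Steps 1-4.
// Assumes a single process; counts are lost on restart, and stale
// entries are never evicted (a real implementation would clean them up).
function createRateLimiter({ windowMs, max, now = Date.now }) {
  const counters = new Map(); // clientId -> { count, windowStart }

  return function middleware(req, res, next) {
    const clientId = req.ip;                 // Step 1: identify the client
    const t = now();
    let entry = counters.get(clientId);

    if (!entry || t - entry.windowStart >= windowMs) {
      entry = { count: 0, windowStart: t };  // Step 4: start a fresh window
      counters.set(clientId, entry);
    }

    entry.count += 1;                        // Step 2: track the request

    if (entry.count > max) {                 // Step 3: enforce the limit
      const retryAfterSec = Math.ceil((entry.windowStart + windowMs - t) / 1000);
      res.set("Retry-After", String(retryAfterSec));
      return res.status(429).send("Too Many Requests");
    }
    next();
  };
}
```

In an Express app it would be mounted the same way as the library version, e.g. app.use("/api", createRateLimiter({ windowMs: 60 * 1000, max: 100 })). Note that each client's window starts with its first request rather than on a clock boundary.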

Why Rate Limiting is Important

Rate limiting is essential for maintaining system stability and security.

Prevents Abuse

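Protects APIs from abuse such as brute-force attacks and request floods. Against large distributed (DDoS) attacks it is one layer of defense rather than a complete solution.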

Ensures Fair Usage

Prevents a single client from consuming all resources.

Improves Performance

Reduces server load and ensures consistent response times.

Enhances Security

Limits repeated login attempts and protects sensitive endpoints.

Supports Scalability

Keeps load within the capacity a system was designed for, so that growing traffic results in rejected excess requests rather than outages.

Common Rate Limiting Algorithms

Different algorithms are used to implement rate limiting effectively.

Fixed Window

Counts requests in fixed time intervals:

```javascript
if (requestsInCurrentMinute > limit) {
  blockRequest();
}
```

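Simple, but it allows bursts at window boundaries: a client can send the full limit at the end of one window and again at the start of the next, briefly doubling the effective rate.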
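To make the boundary problem concrete, here is a minimal single-client sketch with windows aligned to the clock. The class name and the explicit millisecond timestamps are illustrative assumptions:

```javascript
// Minimal fixed-window counter for one client. Windows are aligned to
// the clock (e.g. 0-59.999 s, 60-119.999 s); timestamps are passed in.
class FixedWindowLimiter {
  constructor(limit, windowMs) {
    this.limit = limit;
    this.windowMs = windowMs;
    this.windowId = null;
    this.count = 0;
  }

  allow(nowMs) {
    const windowId = Math.floor(nowMs / this.windowMs);
    if (windowId !== this.windowId) {
      this.windowId = windowId; // a new window has begun: reset the counter
      this.count = 0;
    }
    if (this.count >= this.limit) return false;
    this.count += 1;
    return true;
  }
}

// Boundary burst: 100 requests at 59.9 s and 100 more at 60.0 s all pass,
// i.e. 200 requests within 100 ms despite a limit of 100 per minute.
const limiter = new FixedWindowLimiter(100, 60_000);
let allowed = 0;
for (let i = 0; i < 100; i++) if (limiter.allow(59_900)) allowed++;
for (let i = 0; i < 100; i++) if (limiter.allow(60_000)) allowed++;
console.log(allowed); // 200
```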

Sliding Window

Tracks requests over a rolling time window:

```javascript
timestamps = timestamps.filter(t => t > now - window);
```

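More accurate, since there is no window boundary to exploit, but it must store a timestamp for every request in the window, which costs more memory and processing.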
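A minimal sketch of this sliding window log approach for one client, using the same filter as above; the class name and explicit timestamps are illustrative. Replaying the burst that a fixed window lets through shows the difference:

```javascript
// Minimal sliding-window-log limiter for one client: keep the timestamp
// of every accepted request and count only those inside the last windowMs.
class SlidingWindowLimiter {
  constructor(limit, windowMs) {
    this.limit = limit;
    this.windowMs = windowMs;
    this.timestamps = [];
  }

  allow(nowMs) {
    // Drop timestamps that have slid out of the window
    this.timestamps = this.timestamps.filter((t) => t > nowMs - this.windowMs);
    if (this.timestamps.length >= this.limit) return false;
    this.timestamps.push(nowMs);
    return true;
  }
}

// The same boundary burst is now capped at the limit
const limiter = new SlidingWindowLimiter(100, 60_000);
let allowed = 0;
for (let i = 0; i < 100; i++) if (limiter.allow(59_900)) allowed++;
for (let i = 0; i < 100; i++) if (limiter.allow(60_000)) allowed++;
console.log(allowed); // 100
```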

Token Bucket

Allows requests as long as tokens are available:

```javascript
if (tokens > 0) {
  tokens--;
  allowRequest();
}
```

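Tokens are added back at a fixed rate, up to the bucket's capacity. This permits short bursts (up to the capacity) while keeping the long-term average rate bounded by the refill rate.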
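A minimal token bucket sketch, assuming tokens are refilled lazily from the elapsed time on each call rather than by a background timer; the class name, capacity, and refill rate are illustrative parameters:

```javascript
// Minimal token bucket: holds up to `capacity` tokens and refills at
// `refillPerSec` tokens per second. Each request spends one token.
class TokenBucket {
  constructor(capacity, refillPerSec, nowMs) {
    this.capacity = capacity;
    this.refillPerSec = refillPerSec;
    this.tokens = capacity; // start full so an initial burst is allowed
    this.lastRefill = nowMs;
  }

  allow(nowMs) {
    // Add the tokens earned since the last call, capped at capacity
    const elapsedSec = (nowMs - this.lastRefill) / 1000;
    this.tokens = Math.min(this.capacity, this.tokens + elapsedSec * this.refillPerSec);
    this.lastRefill = nowMs;

    if (this.tokens >= 1) {
      this.tokens -= 1;
      return true;
    }
    return false;
  }
}

// A burst of 5 is allowed immediately, then about 1 request per second
const bucket = new TokenBucket(5, 1, 0);
const burst = [0, 0, 0, 0, 0, 0].map((t) => bucket.allow(t));
console.log(burst); // five true values, then false
console.log(bucket.allow(1000)); // true: one token was refilled after 1 s
```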

Leaky Bucket

Processes requests at a fixed rate:

```javascript
queue.push(request);
processQueueAtFixedRate();
```

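Requests leave the queue at a constant rate no matter how quickly they arrive, which smooths out traffic spikes. When the queue is full, new requests are rejected.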
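A minimal leaky bucket sketch, assuming a bounded in-memory queue that releases one request per fixed interval to a handler function. A server would typically drive leak() from a timer; here time is passed explicitly so the behavior is easy to follow. The class and parameter names are illustrative:

```javascript
// Minimal leaky bucket: a bounded FIFO queue that hands one request to
// `handle` every `leakIntervalMs`. Assumes a monotonic clock.
class LeakyBucket {
  constructor(capacity, leakIntervalMs, handle, nowMs) {
    this.capacity = capacity;
    this.leakIntervalMs = leakIntervalMs;
    this.handle = handle; // called once per request as it leaks out
    this.queue = [];
    this.lastLeak = nowMs;
  }

  // Process the requests whose turn has come since the last leak
  leak(nowMs) {
    const slots = Math.floor((nowMs - this.lastLeak) / this.leakIntervalMs);
    this.lastLeak += slots * this.leakIntervalMs;
    for (const request of this.queue.splice(0, slots)) this.handle(request);
  }

  // Enqueue a request, or reject it if the bucket is already full
  offer(request, nowMs) {
    this.leak(nowMs);
    if (this.queue.length >= this.capacity) return false; // overflow: drop
    this.queue.push(request);
    return true;
  }
}

// Capacity 3, one request leaves every 100 ms
const processed = [];
const bucket = new LeakyBucket(3, 100, (r) => processed.push(r), 0);
const accepted = ["a", "b", "c", "d"].map((r) => bucket.offer(r, 0));
console.log(accepted);  // first three accepted, "d" dropped
bucket.leak(250);       // two 100 ms slots have elapsed
console.log(processed); // "a" and "b" processed, in arrival order
```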

Rate Limiting in APIs and Microservices

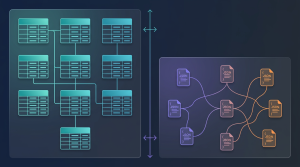

In microservices architectures, rate limiting is applied at multiple levels.

API Gateway Level

Rate limiting is often enforced at the gateway:

```javascript
app.use("/api", limiter);
```

This protects backend services from excessive traffic.

Service-Level Rate Limiting

Each microservice can enforce its own limits:

```javascript
if (userRequests > limit) {
  rejectRequest();
}
```

Distributed Rate Limiting

Using Redis for shared counters:

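```javascript
const redis = require("redis");

const client = redis.createClient(); // node-redis v4+; defaults to localhost:6379
const ready = client.connect(); // v4+ requires an explicit connect

async function recordRequest() {
  await ready;
  // INCR is atomic, so every app instance updates the same shared counter
  return client.incr("user:1:requests");
}
```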

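Because Redis INCR is atomic and every application instance increments the same key, the count stays consistent no matter which instance handles a request. In practice, the key is also given an expiry (for example with the EXPIRE command) so that the counter resets at the end of each window.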
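A sketch of a distributed fixed-window check along these lines, assuming a node-redis v4-style client whose incr and expire methods return promises; the isAllowed function and the key format are hypothetical. Including the window number in the key gives each window a fresh counter, and EXPIRE lets Redis delete old keys:

```javascript
// Minimal distributed fixed-window check. Assumes `client` exposes
// promise-returning incr(key) and expire(key, seconds), as node-redis v4 does.
// The "ratelimit:<userId>:<windowId>" key format is hypothetical.
async function isAllowed(client, userId, limit, windowSec, nowMs = Date.now()) {
  // One key per user per window
  const windowId = Math.floor(nowMs / (windowSec * 1000));
  const key = `ratelimit:${userId}:${windowId}`;

  const count = await client.incr(key); // atomic across all app instances
  if (count === 1) {
    // First request in this window: have Redis remove the key afterwards
    await client.expire(key, windowSec);
  }
  return count <= limit;
}
```

Because INCR and EXPIRE are two separate commands here, a crash between them could leave a key without an expiry; production implementations often combine them in a MULTI transaction or a Lua script.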

Benefits of Rate Limiting

- Protects systems from overload

- Improves API reliability and uptime

- Ensures fair resource distribution

- Enhances security against attacks

- Enables predictable system performance

- Supports multi-tenant architectures


Challenges of Rate Limiting

Distributed Systems Complexity

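Counters must be shared across nodes, typically in a central store such as Redis, which adds a network round trip per request and requires atomic updates to avoid race conditions.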

False Positives

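Legitimate users may be blocked, for example when many users share one IP address behind a corporate proxy or NAT, or during a genuine surge in traffic.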

Choosing the Right Limits

Limits that are too strict hurt usability, while limits that are too loose fail to protect the system.

Performance Overhead

Tracking requests adds processing and memory overhead to every request, plus a network call when counters live in a shared store.

When to Use Rate Limiting

Rate limiting should be used in scenarios such as:

- Public APIs

- Authentication endpoints

- Payment systems

- High-traffic applications

- Microservices communication

Example for login protection:

```javascript
if (loginAttempts > 5) {
  blockUser();
}
```
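A slightly fuller sketch of login protection, assuming failed attempts are tracked per username in memory. The LoginGuard class, the five-attempt threshold, and the 15-minute window are illustrative choices, not values taken from a specific standard:

```javascript
// Minimal login-attempt limiter: after `maxAttempts` failures for the same
// username within `windowMs`, further attempts are blocked until the window
// expires. Names and thresholds are illustrative assumptions.
class LoginGuard {
  constructor(maxAttempts = 5, windowMs = 15 * 60 * 1000) {
    this.maxAttempts = maxAttempts;
    this.windowMs = windowMs;
    this.failures = new Map(); // username -> { count, firstFailure }
  }

  isBlocked(username, nowMs) {
    const entry = this.failures.get(username);
    if (!entry) return false;
    if (nowMs - entry.firstFailure >= this.windowMs) {
      this.failures.delete(username); // window expired: forget old failures
      return false;
    }
    return entry.count >= this.maxAttempts;
  }

  recordFailure(username, nowMs) {
    const entry = this.failures.get(username);
    if (!entry || nowMs - entry.firstFailure >= this.windowMs) {
      this.failures.set(username, { count: 1, firstFailure: nowMs });
    } else {
      entry.count += 1;
    }
  }

  recordSuccess(username) {
    this.failures.delete(username); // reset after a successful login
  }
}
```

A login handler would call isBlocked before checking credentials and respond with 429 if it returns true, then call recordFailure or recordSuccess depending on the outcome.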

Conclusion

Rate limiting is a critical technique for managing traffic, protecting systems, and ensuring fair usage in modern applications. By controlling the number of requests a client can make within a defined time frame, it helps prevent abuse, reduces the risk of system overload, and improves overall application performance.

In real-world systems, rate limiting also plays a key role in maintaining service reliability and user experience. Without it, a sudden spike in traffic or misuse by a few clients can degrade performance for all users. By enforcing limits, organizations can ensure that resources are distributed fairly and that critical services remain available even under high demand.

While implementing rate limiting requires careful consideration of algorithms, thresholds, and system design, the benefits far outweigh the challenges. Selecting the right strategy, such as token bucket or sliding window, depends on the specific use case and traffic patterns. Additionally, integrating rate limiting with caching, monitoring, and logging systems can further enhance its effectiveness.

In distributed systems and microservices architectures, rate limiting becomes even more important. It helps manage inter-service communication, prevents cascading failures, and ensures that no single service becomes a bottleneck. When combined with API gateways and centralized control mechanisms, it provides a scalable way to handle growing traffic.

When implemented correctly, rate limiting helps build robust, reliable, and secure APIs that can handle real-world traffic efficiently while maintaining consistent performance and availability.

Frequently Asked Questions

What is rate limiting in simple terms?

Rate limiting is a technique that restricts how many requests a user or client can make within a specific time period. It acts as a control mechanism to prevent excessive usage and ensures fair access to system resources for all users.

How does rate limiting work?

It works by tracking the number of requests made by a client, typically using identifiers such as IP addresses, API keys, or user tokens. Once the number of requests exceeds a predefined limit within a time window, further requests are temporarily blocked or delayed until the limit resets.

Why is rate limiting important in APIs?

Rate limiting is important because it prevents abuse, ensures fair usage among users, improves system performance, and protects APIs from overload or malicious attacks. It also helps maintain system stability during traffic spikes and ensures consistent service availability.

What are common rate limiting techniques?

Common techniques include fixed window, sliding window, token bucket, and leaky bucket algorithms. Each approach has its own trade-offs in terms of accuracy, performance, and complexity, and the choice depends on the application’s requirements and traffic behavior.